Automotive

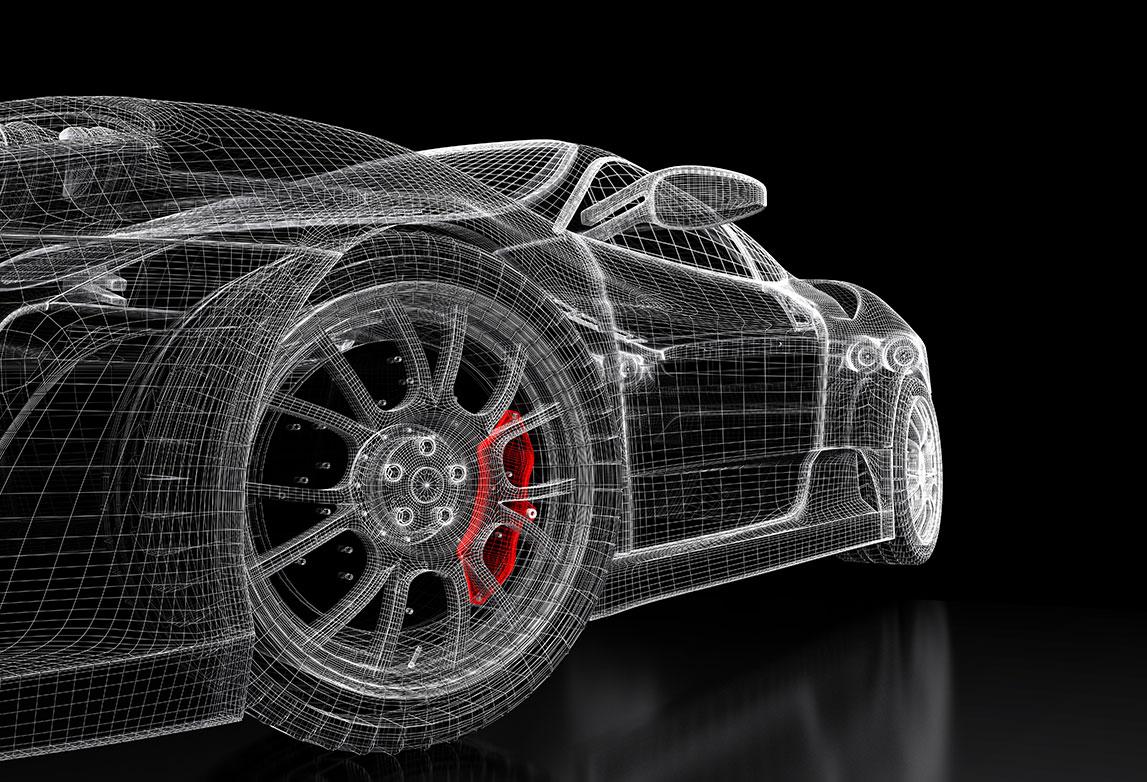

Automotive Engineering Services

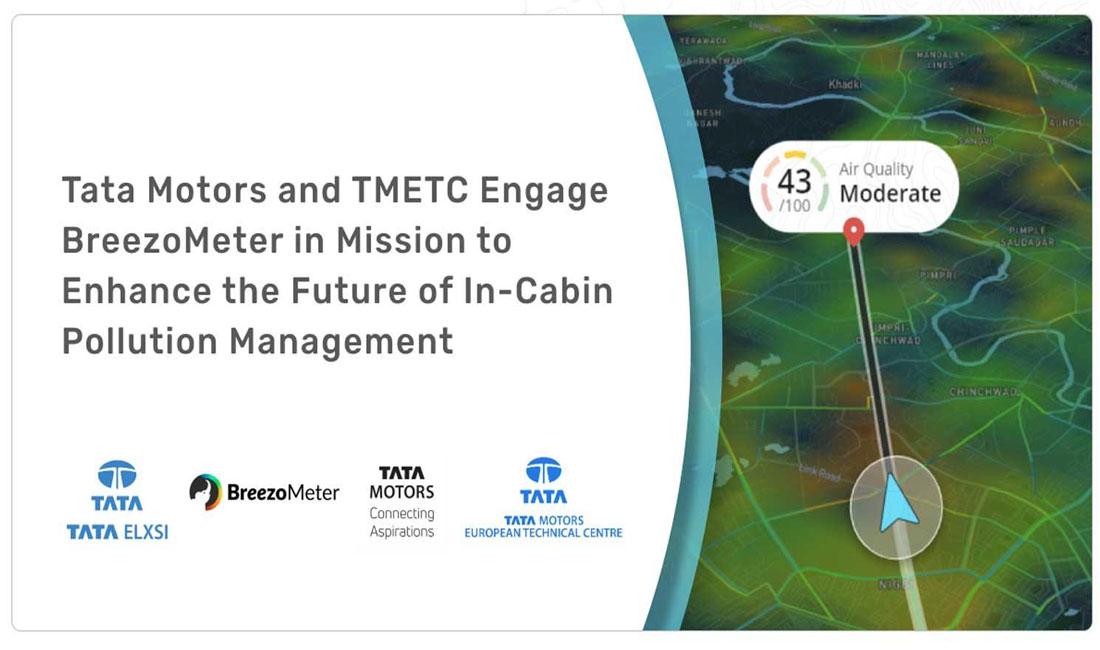

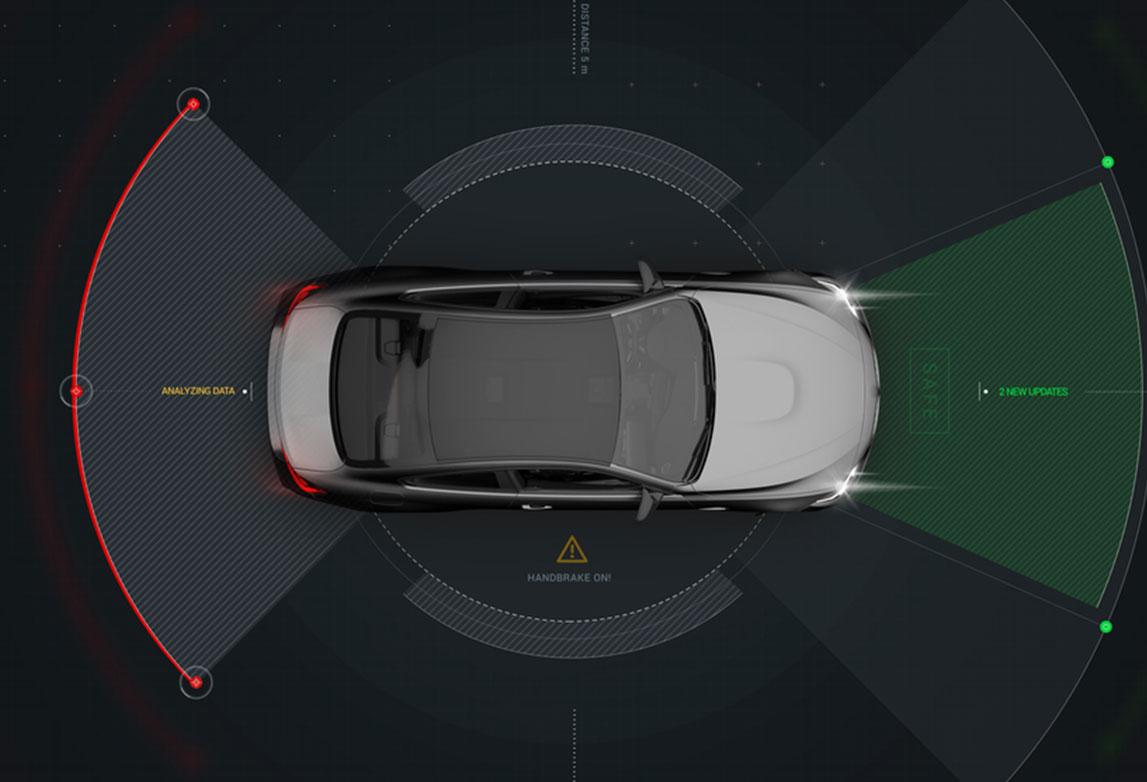

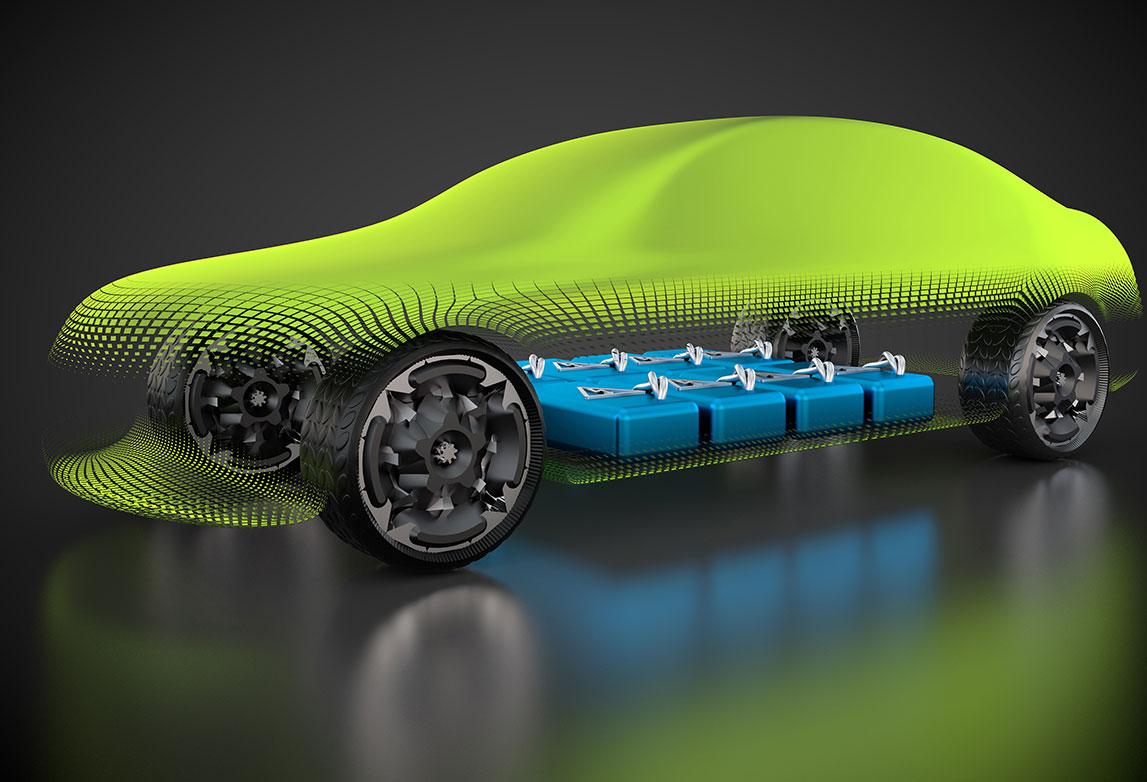

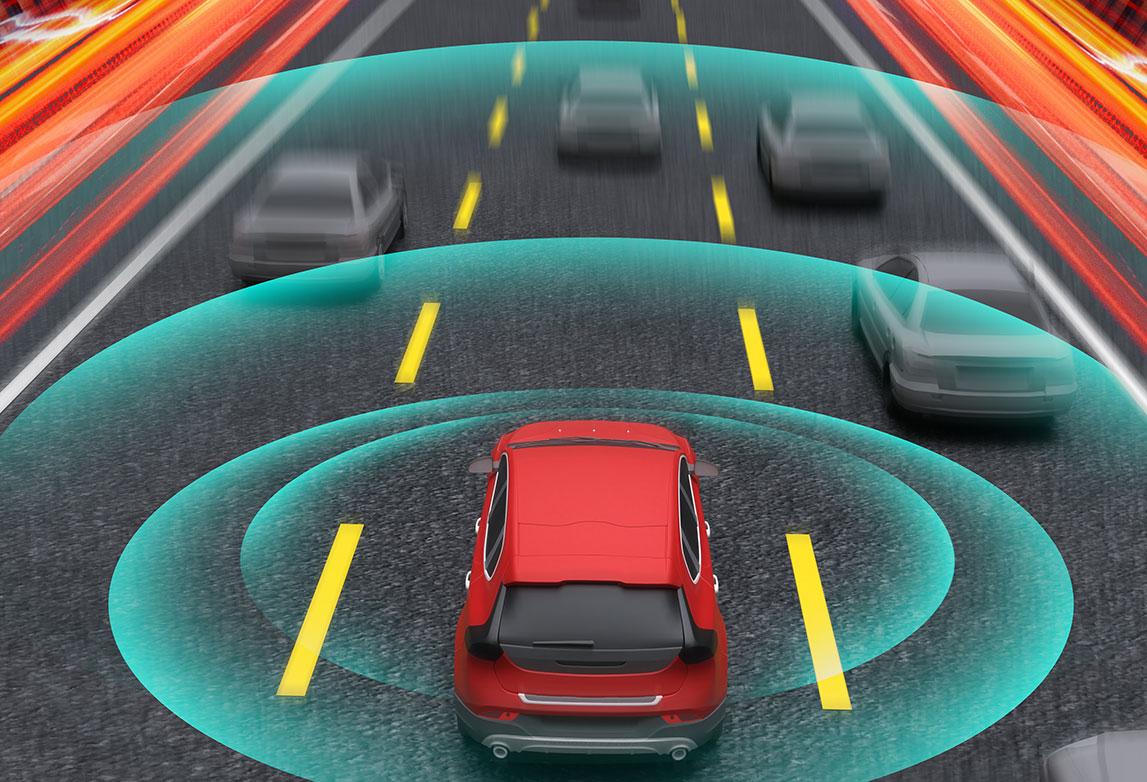

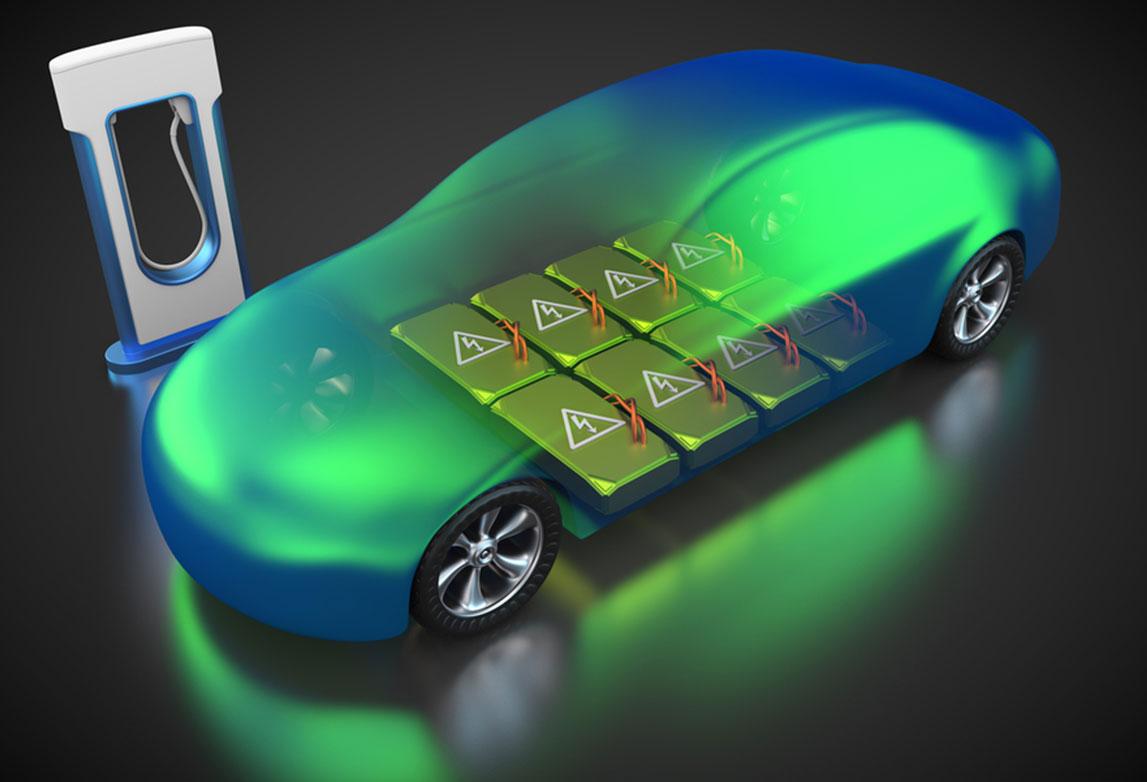

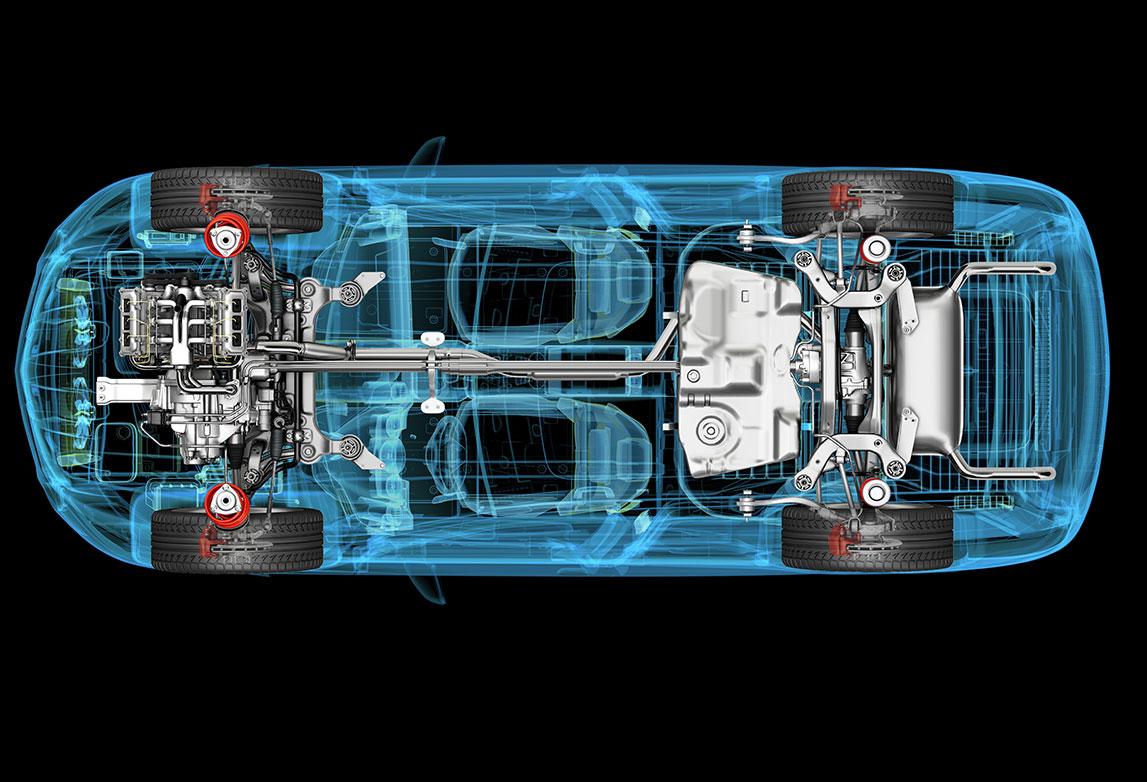

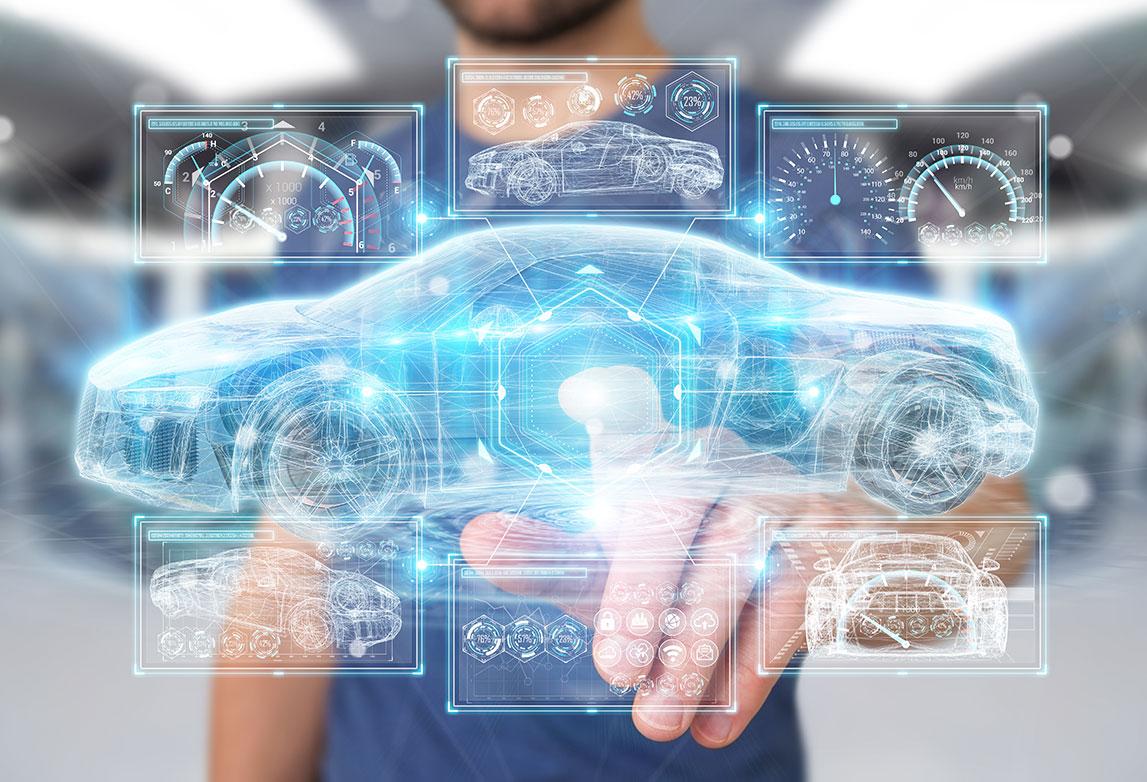

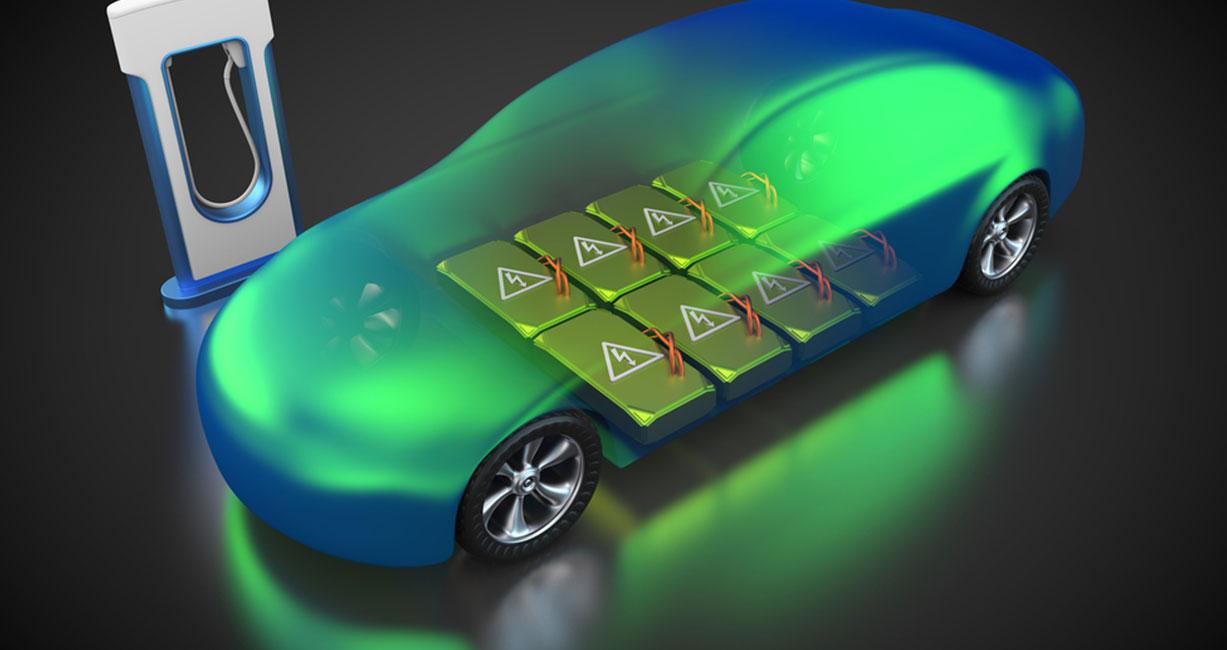

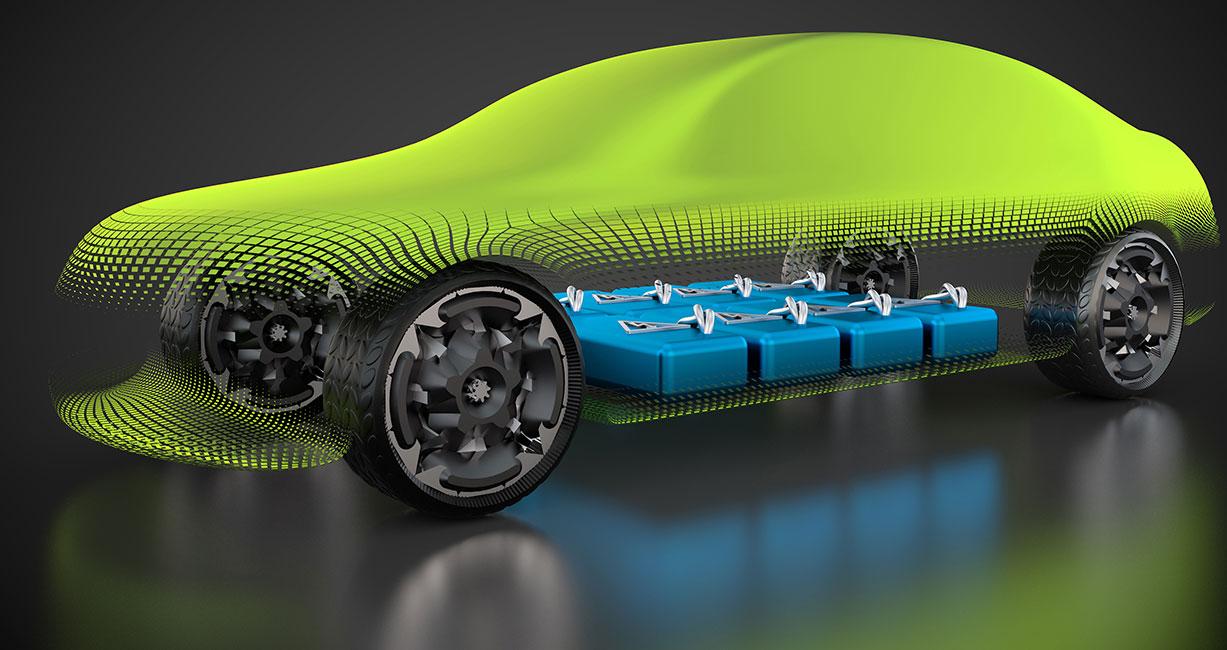

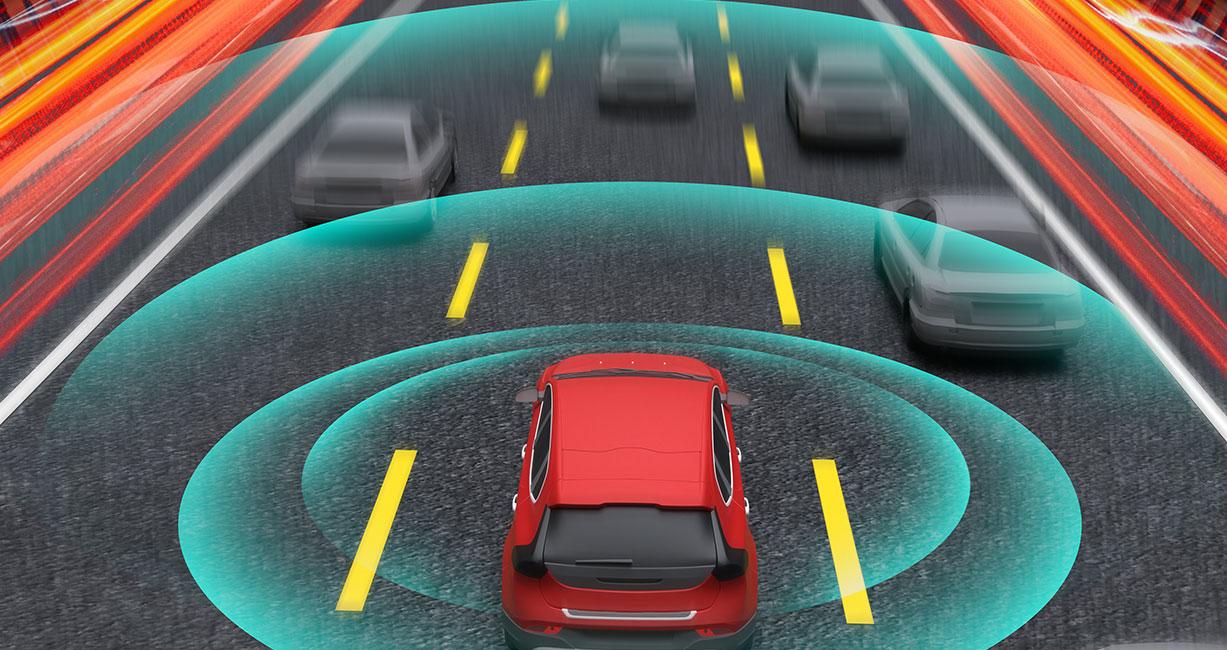

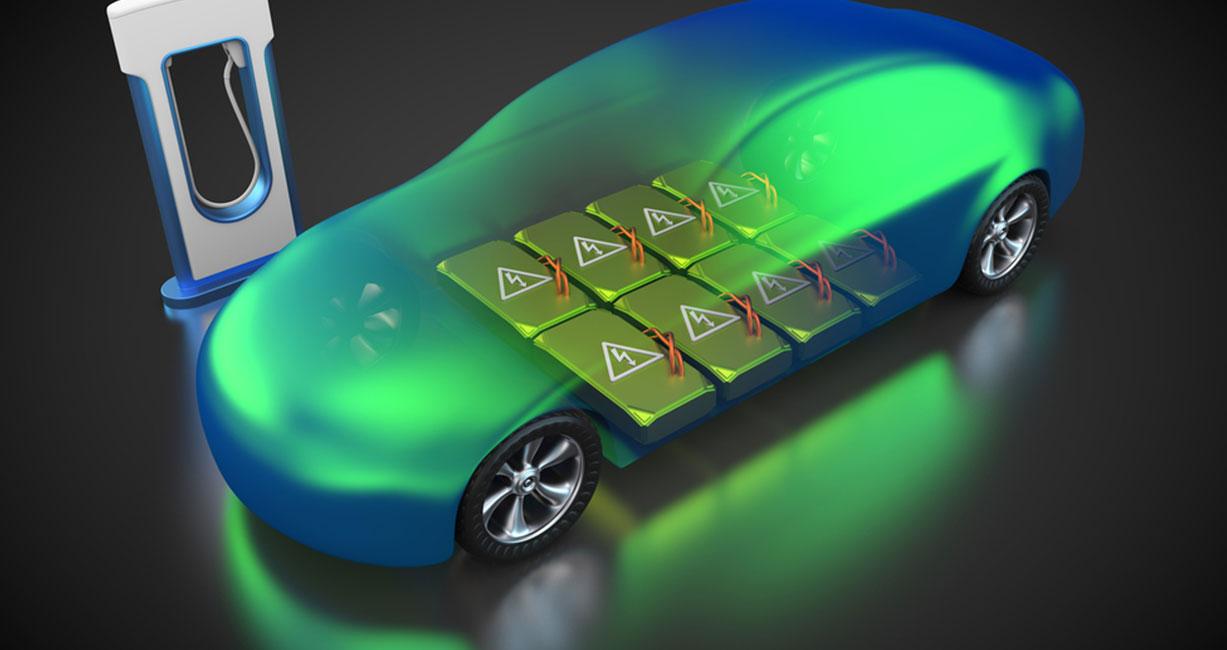

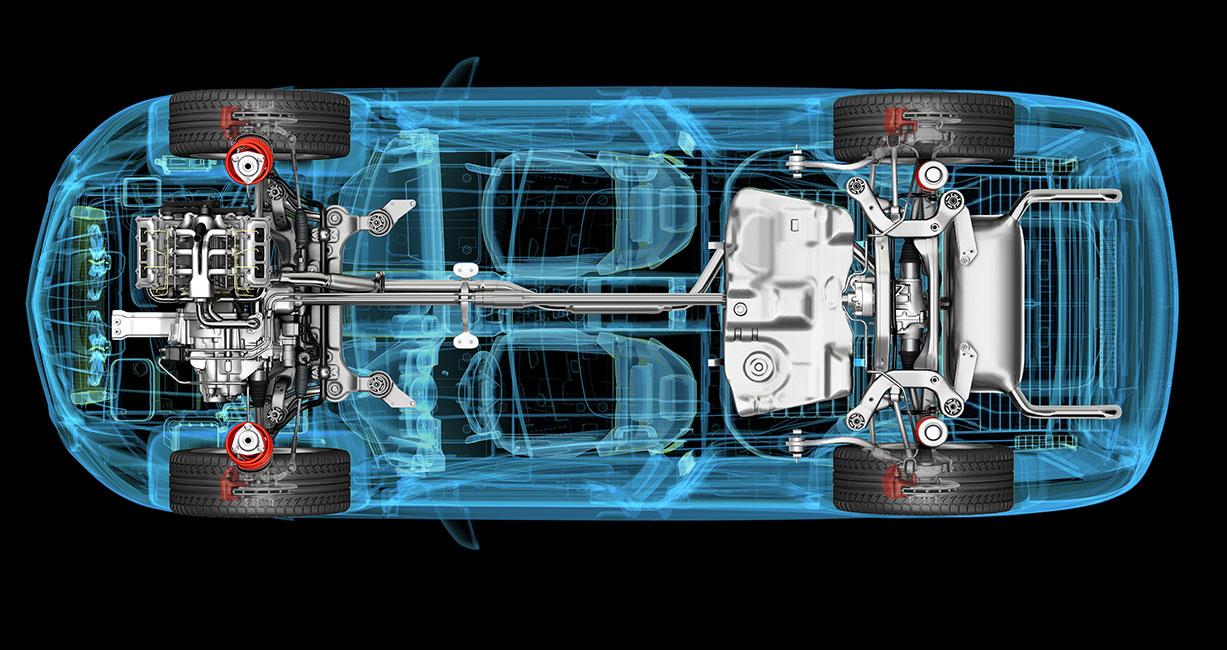

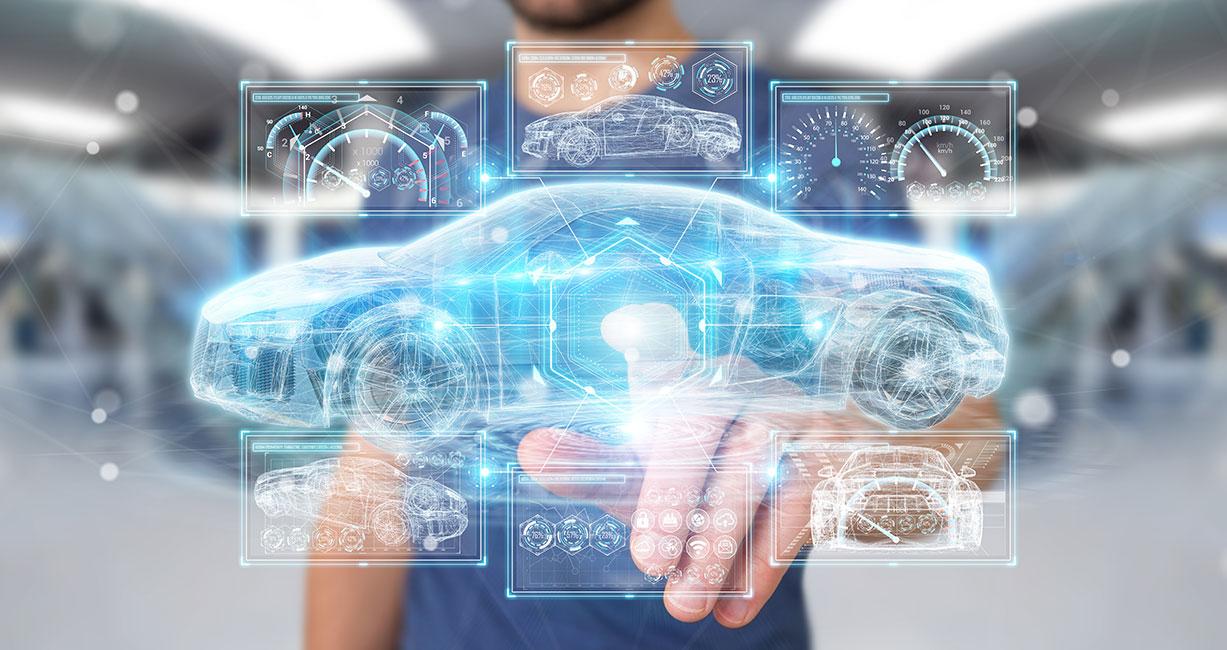

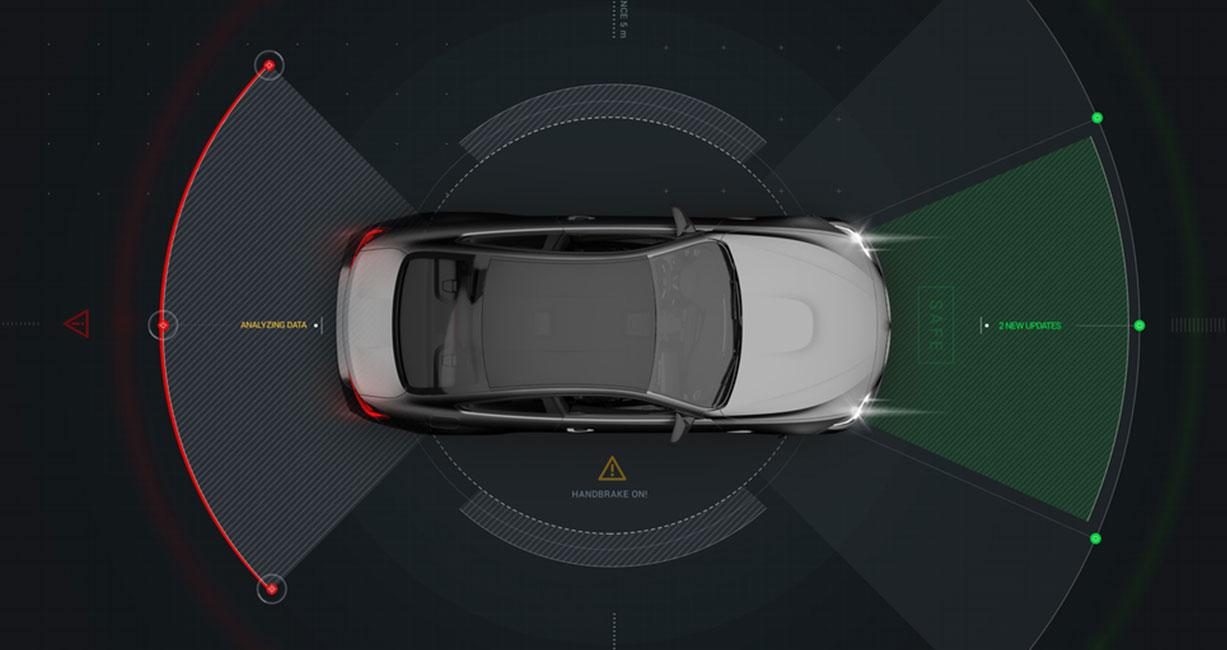

As the world rapidly digitalizes, the automotive industry is undergoing a massive transformation. Software is the new oil, and every player in the automotive industry is reconsidering subsystem ownership. With our wide array of automotive engineering services and solutions, and our experience working with automotive OEMs and suppliers, we're ready to help you spin the wheel toward future mobility.

Automotive manufacturers and suppliers are looking for partners to help them take advantage of new growth opportunities presented by emerging technologies such as autonomous driving, electrification, and connectivity. Tata Elxsi's automotive engineering and design services, along with its products and solution accelerators, can help you keep up with evolving market expectations and add value at every stage of your product development lifecycle.

Products & Services

C.A.S.E, Passenger Experience, Vehicle Systems, Automotive Software Engineering, eMobility HILS, TESA - Smart Annotation

The Road Ahead

Product & Service launches, Conferences, and Media updates

Subscribe to Elxsimotive Newsletter on LinkedIn

Insights & Experiences

Technical Whitepaper

Temperature-Driven Capacity Fade in Lithium-Ion Batteries - A Study on Cycle and Calendar Aging

Blog

Automotive System Requirement Generation using Retrieval Augmented Generation (RAG) and Generative AI

Technical Whitepaper

Software-Defined Vehicles: Combining Real-Time Safety-Critical Functions with Cloud Connectivity

Case Study

Infotainment Software Development Partner For A Global Tier-1 With Module Delivery Ownerships
